By Futurist Thomas Frey

From a shirtless philosopher in 1943 to ChatGPT — the people, the breakthroughs, the winters, and the single idea that refused to die

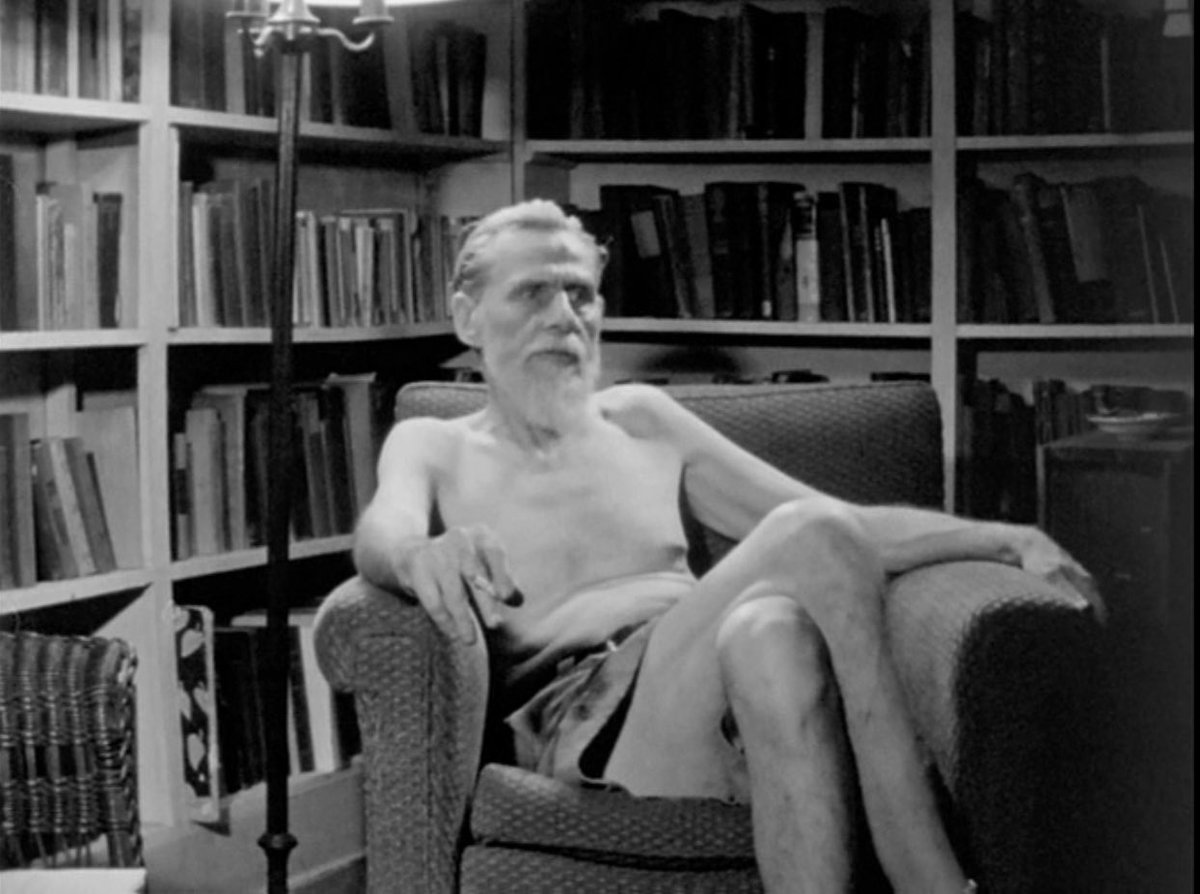

The Man Without a Shirt

In January 2026, Marc Andreessen sat down for an 81-minute podcast conversation on the a16z show and did something most technology commentary doesn’t bother to do: he started at the beginning. Not the beginning of this AI cycle, or large language models, or even deep learning. He started in 1943 — with a paper, a seaside villa, and a neurophysiologist who, in archived footage from 1946, can be seen discussing the future of computing without a shirt on, apparently unbothered by the formality the topic deserved.

That man was Warren McCulloch. His observation — that computers could one day be built on the model of the human brain, using neural networks rather than pure mathematical logic — was the road not taken for most of the next eight decades. Andreessen’s point was simple and important: what feels like an overnight revolution is actually the payoff on an 80-year bet made by a small group of people who spent most of that time being ignored, defunded, and told they were wrong. Understanding that history explains why what’s happening now is different from everything that came before — and why it is probably not going to stop.

Warren McCulloch, the shirtless philosopher whose thinking on the first artificial neuron introduced a radical idea: intelligence could be built, not programmed. It set a path that would take decades to fully unfold.

1943: The Paper That Started Everything

Warren McCulloch, a neurophysiologist, and Walter Pitts (a mathematical prodigy who had run away from home as a teenager to attend university lectures and was, at the time, technically homeless) published “A Logical Calculus of the Ideas Immanent in Nervous Activity” at the University of Chicago. The paper proposed the first mathematical model of a neural network: an artificial neuron that received inputs, combined them, compared the total against a threshold, and fired an output based on logical rules.
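To make that concrete, here is a minimal Python sketch of a threshold unit in the spirit of the McCulloch-Pitts neuron. The function name `mp_neuron` and the specific weights and thresholds are illustrative assumptions, not notation taken from the 1943 paper.

```python
def mp_neuron(inputs, weights, threshold):
    """Fire (return 1) when the weighted sum of binary inputs reaches the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0


# Hypothetical hand-picked settings that turn one unit into a logic gate.
def AND(a, b):
    return mp_neuron([a, b], [1, 1], threshold=2)


def OR(a, b):
    return mp_neuron([a, b], [1, 1], threshold=1)


def NOT(a):
    # A negative (inhibitory) weight keeps the unit silent when the input is on.
    return mp_neuron([a], [-1], threshold=0)


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, "AND:", AND(a, b), "OR:", OR(a, b))
```

Nothing here learns. The weights and thresholds are fixed by hand, which is the point: simple units wired together can already express logic.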

The idea embedded in it was radical: that the logic of the human brain could be formally described and computationally replicated. Not mimicked through clever programming, but actually replicated through networks of simple interconnected units; the ability of such networks to learn by adjusting their own weights would come later. The computer industry took a different road: building literal mathematical machines to execute explicit instructions at enormous speed. The neural path would take 80 more years to fully develop. But it was always there, tended by a minority who believed it was the more important direction. John von Neumann cited the paper. Norbert Wiener found it foundational. Marvin Minsky, later one of AI’s central figures, was influenced by McCulloch and built an early neural network in 1951 using 3,000 vacuum tubes to simulate 40 neurons. The seed was planted.

“Can machines think?”—Alan Turing’s one question in 1950 ignited a field that is now reshaping what it means to be human.

1950–1956: Turing’s Question and the Birth of a Field

In 1950, Alan Turing published “Computing Machinery and Intelligence” and opened with the question that became the field’s defining provocation: “Can machines think?” Rather than get lost in philosophy, he proposed a practical test — if a machine could convince a human judge through text conversation alone that it was human, that was sufficient evidence of intelligence worth taking seriously. The Turing Test was born.

Six years later, John McCarthy organized a two-month workshop at Dartmouth with an audacious premise. McCarthy, Marvin Minsky, Nathaniel Rochester of IBM, and Claude Shannon, the father of information theory, claimed that “every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it.” It was in the 1955 proposal for the 1956 Dartmouth workshop that McCarthy coined the term “artificial intelligence.” The field had a name, researchers, and ambition. What it lacked for several decades was the ability to deliver.

1957–1969: The Perceptron, the Promise, and the First Winter

In 1957, psychologist Frank Rosenblatt built the Perceptron at the Cornell Aeronautical Laboratory. It was the first artificial neural network capable of learning from data, updating its own internal connections based on errors. The Navy funded it. The New York Times reported in 1958 that the Navy expected it would one day “walk, talk, see, write, reproduce itself and be conscious of its existence.” By the mid-1960s, Perceptrons were everywhere.
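The error-driven update described above can be sketched in a few lines of Python. This is a textbook form of the perceptron learning rule, not a reconstruction of Rosenblatt’s hardware; the function names, the learning rate, and the choice of AND as the training task are all assumptions made for illustration.

```python
def predict(weights, bias, x):
    """Threshold unit: output 1 when the weighted sum plus bias is positive."""
    s = bias + sum(w * xi for w, xi in zip(weights, x))
    return 1 if s > 0 else 0


def train_perceptron(samples, epochs=20, lr=1.0):
    """Nudge weights toward the target whenever a prediction is wrong."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        mistakes = 0
        for x, target in samples:
            error = target - predict(weights, bias, x)  # -1, 0, or +1
            if error != 0:
                mistakes += 1
                weights = [w + lr * error * xi for w, xi in zip(weights, x)]
                bias += lr * error
        if mistakes == 0:  # a full pass with no errors: training is done
            break
    return weights, bias


# Hypothetical training set: the logical AND function.
AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(AND_DATA)

if __name__ == "__main__":
    for x, target in AND_DATA:
        print(x, "->", predict(weights, bias, x), "(target", str(target) + ")")
```

AND is linearly separable, so the rule settles on a correct set of weights after a handful of passes over the data.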

Then Minsky — who had been Rosenblatt’s classmate at the Bronx High School of Science — published “Perceptrons” in 1969 with Seymour Papert. The book proved mathematically that a single-layer network could not solve basic logical functions like XOR. Funding collapsed. Researchers fled to symbolic AI. The first AI Winter arrived. The tragedy, which Minsky later acknowledged, was that the book also noted multi-layer networks could solve XOR — but nobody yet knew how to train them. That problem would take seventeen more years to crack.
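The XOR limitation is easy to see in code. The Python sketch below searches a small grid of weights and biases for a single threshold unit that computes XOR and finds none, then shows a hand-wired two-layer network that does. The grid search is only a demonstration over the values tried, not the mathematical proof given in the book, and every name and number here is an illustrative assumption.

```python
from itertools import product

XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]


def unit(x, weights, bias):
    """Single threshold unit: fires when the weighted sum plus bias is positive."""
    return 1 if bias + sum(w * xi for w, xi in zip(weights, x)) > 0 else 0


def single_unit_solves_xor(grid):
    """Try every (w1, w2, bias) combination in the grid against all four XOR cases."""
    for w1, w2, b in product(grid, repeat=3):
        if all(unit(x, (w1, w2), b) == t for x, t in XOR_DATA):
            return True
    return False


def two_layer_xor(x):
    """Hand-wired hidden layer: XOR is 'OR but not AND'."""
    h_or = unit(x, (1, 1), -0.5)   # fires if at least one input is on
    h_and = unit(x, (1, 1), -1.5)  # fires only if both inputs are on
    return unit((h_or, h_and), (1, -1), -0.5)


if __name__ == "__main__":
    grid = [i / 2 for i in range(-8, 9)]  # -4.0 to 4.0 in steps of 0.5
    print("single unit solves XOR:", single_unit_solves_xor(grid))
    print("two-layer outputs:", [two_layer_xor(x) for x, _ in XOR_DATA])
```

The two-layer weights above were chosen by hand. Finding such weights automatically, from data, was the open problem.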

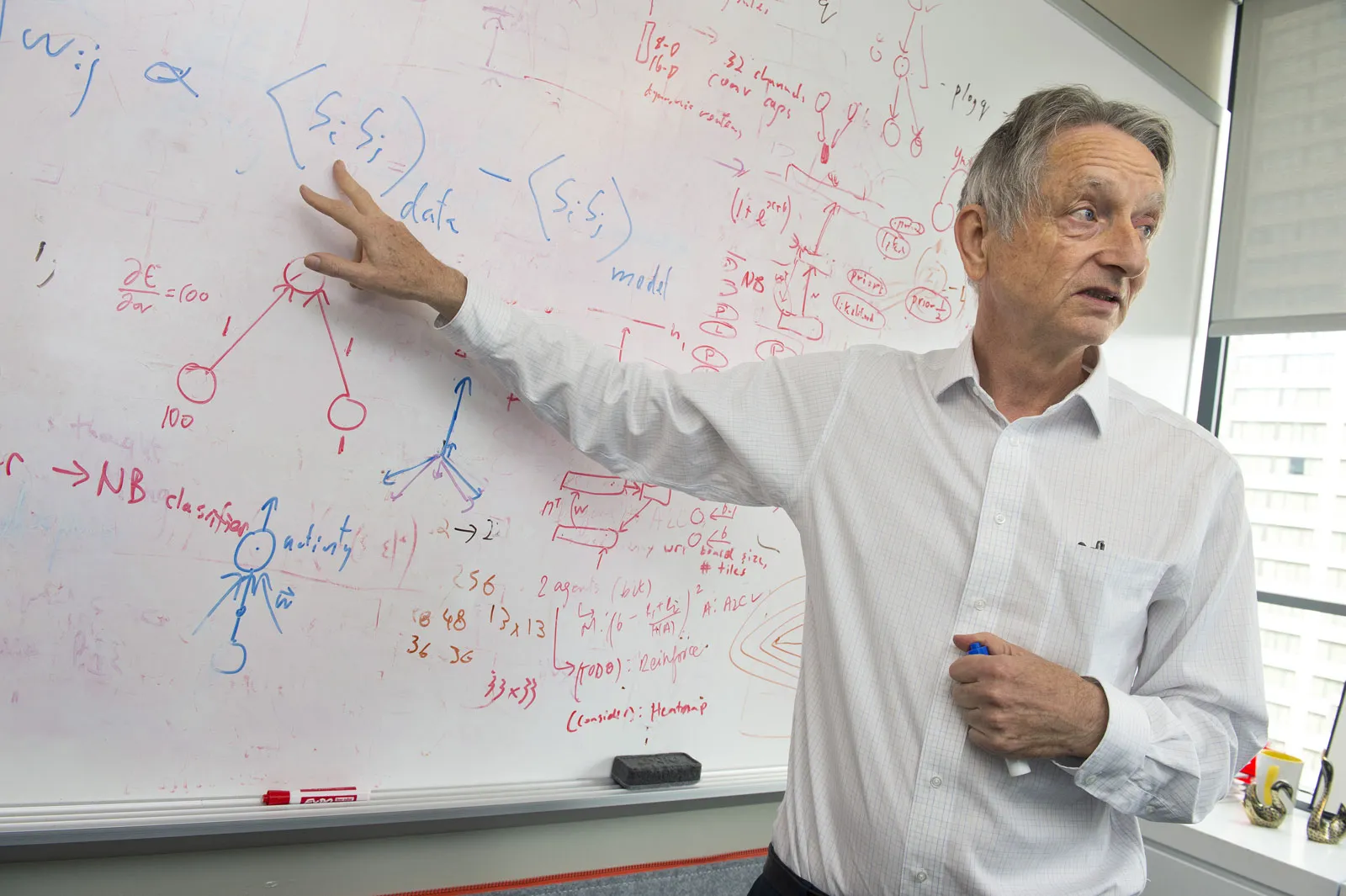

Geoffrey Hinton’s backpropagation didn’t win immediately, but it made deep learning possible once compute caught up decades later and unlocked modern AI.

1986: The Algorithm That Changed Everything

Geoffrey Hinton spent the AI Winter years convinced the brain’s massive parallelism held the key to machine intelligence, continuing to work on neural networks when doing so was roughly equivalent to professional suicide. In 1986, Hinton, David Rumelhart, and Ronald Williams published “Learning Representations by Back-Propagating Errors” — the paper that solved the credit assignment problem and made training multi-layer networks mathematically tractable.

Backpropagation worked by running the network forward, measuring output errors, then propagating that error signal backward through every layer, adjusting each connection in proportion to its contribution to the mistake. Neural networks could now learn all the way down. A second brief spring followed, then a second winter. Expert systems (elaborate rule-based programs) had dominated the 1980s before proving too brittle and expensive to maintain. When they collapsed, funding dried up again. But backpropagation was real. The tool existed. It was waiting for computers fast enough to use it at scale.
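The forward pass, error measurement, and backward pass can be sketched for a tiny network in plain Python. This is a minimal illustration, assuming two inputs, two sigmoid hidden units, one sigmoid output, and a squared-error loss; the function names, layer sizes, learning rate, and training example are all hypothetical choices, not details from the 1986 paper.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(params, x):
    """Run the network forward; return hidden activations and the output."""
    W1, b1, W2, b2 = params
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(W1, b1)]
    output = sigmoid(sum(w * h for w, h in zip(W2, hidden)) + b2)
    return hidden, output


def loss(params, x, target):
    _, output = forward(params, x)
    return 0.5 * (output - target) ** 2


def backprop(params, x, target):
    """Return the gradient of the loss with respect to every parameter."""
    W1, b1, W2, b2 = params
    hidden, output = forward(params, x)
    # Error signal at the output unit (chain rule through the sigmoid).
    delta_out = (output - target) * output * (1.0 - output)
    grad_W2 = [delta_out * h for h in hidden]
    # Push the error backward: each hidden unit's share is scaled by the
    # weight connecting it to the output and by its own sigmoid slope.
    delta_hidden = [delta_out * w * h * (1.0 - h) for w, h in zip(W2, hidden)]
    grad_W1 = [[d * xi for xi in x] for d in delta_hidden]
    return grad_W1, delta_hidden, grad_W2, delta_out


def sgd_step(params, grads, lr):
    """Move every parameter a small step against its gradient."""
    W1, b1, W2, b2 = params
    gW1, gb1, gW2, gb2 = grads
    return (
        [[w - lr * g for w, g in zip(row, grow)] for row, grow in zip(W1, gW1)],
        [b - lr * g for b, g in zip(b1, gb1)],
        [w - lr * g for w, g in zip(W2, gW2)],
        b2 - lr * gb2,
    )


rng = random.Random(0)  # fixed seed so the run is repeatable
params = (
    [[rng.uniform(-1, 1) for _ in range(2)] for _ in range(2)],
    [0.0, 0.0],
    [rng.uniform(-1, 1) for _ in range(2)],
    0.0,
)
x, target = (1.0, 0.0), 1.0
loss_before = loss(params, x, target)
for _ in range(100):
    params = sgd_step(params, backprop(params, x, target), lr=0.5)
loss_after = loss(params, x, target)

if __name__ == "__main__":
    print("loss before:", loss_before)
    print("loss after: ", loss_after)
```

The same pattern extends to any number of layers: each layer receives an error signal from the layer above and passes a scaled version down to the layer below.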

The Quiet Years: LeCun, Bengio, and the Believers

Through the 1990s and 2000s, a small community kept the neural network program alive at the margins. Yann LeCun at Bell Labs demonstrated that convolutional neural networks could read handwritten digits reliably enough for real bank check-processing systems: actual commercial deployment, quiet and largely unnoticed. Yoshua Bengio at the University of Montreal published foundational work on language models and distributed word representations, intellectual precursors to the large language models that would arrive two decades later. Hinton, LeCun, and Bengio, who would share the 2018 Turing Award for their work on deep learning, continued building theoretical and empirical foundations through years when the dominant sentiment was that deep learning was a dead end. That sentiment was wrong, and so was the rest of the field’s judgment of them.

2012: The Moment That Started the Current Era

In October 2012, Geoffrey Hinton and his students Alex Krizhevsky and Ilya Sutskever entered the ImageNet visual recognition competition with a deep convolutional neural network called AlexNet. They won, and the margin shocked everyone. AlexNet achieved a top-5 error rate of 15.3 percent. The next best entry was 26.2 percent. That is not incremental improvement. It is a discontinuity, the kind of gap signaling that one team was playing a fundamentally different game. The key was the combination of a deep neural network architecture, a massive training dataset, and GPUs repurposed for the parallel matrix mathematics that training required. Hinton later summarized it with characteristic dryness: “Ilya thought we should do it, Alex made it work, and I got the Nobel Prize.”

Within months, every major technology company was hiring neural network researchers. Google acquired a startup Hinton had founded. Facebook opened an AI lab. The money that had twice abandoned the field came back — and this time the technology actually worked at real scale on real problems with real economic value. The third spring arrived. Unlike the first two, it did not end.

When Google’s DeepMind mastered Go and defeated Korea’s Lee Sedol, it wasn’t just a game—it was the moment human intuition met a machine that could outthink it.

2017 and Beyond: Transformers, Scale, and the Arrival

In June 2017, eight Google researchers published “Attention Is All You Need” — perhaps the most important research paper in AI history. The transformer architecture replaced sequential recurrent networks with self-attention: a mechanism letting every part of a sequence simultaneously consider every other part, weighted by relevance. Transformers could be trained in parallel, scaled to far larger datasets, and — crucially — their capabilities improved in ways not fully predictable from smaller versions. Scale the model, scale the data, scale the compute: get a qualitatively better system. This scaling law drove everything that followed.
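Self-attention is simple enough to sketch in plain Python. The example below implements scaled dot-product attention, the core operation of the transformer, over three toy token vectors. As a simplifying assumption it uses the raw token vectors as queries, keys, and values, whereas a real transformer first passes them through learned projections; the function names and the toy data are hypothetical.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def self_attention(queries, keys, values):
    """Scaled dot-product attention; returns (outputs, attention weights)."""
    scale = math.sqrt(len(keys[0]))
    outputs, all_weights = [], []
    for q in queries:
        # Relevance of every position to this one, normalized by softmax.
        weights = softmax([dot(q, k) / scale for k in keys])
        # Each output is a relevance-weighted blend of all value vectors.
        mixed = [sum(w * v[d] for w, v in zip(weights, values))
                 for d in range(len(values[0]))]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Toy sequence of three 2-dimensional token vectors (illustrative only).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens, tokens, tokens)

if __name__ == "__main__":
    for row in weights:
        print([round(w, 3) for w in row])
```

No position depends on the result for any other position, so every row can be computed at once. That independence is what makes transformers so well suited to parallel hardware.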

OpenAI’s GPT series demonstrated the trajectory (GPT-1 in 2018, GPT-2 in 2019, GPT-3 in 2020 with 175 billion parameters), each generation capable of things the previous one could not do at all. DeepMind’s AlphaGo in 2016 mastered Go well enough to defeat Lee Sedol, one of the world’s best human players. AlphaFold in 2020 largely solved the protein folding problem that had challenged structural biologists for 50 years. Then on November 30, 2022, OpenAI released ChatGPT. It reached an estimated one hundred million users in two months, at the time the fastest adoption of a consumer technology on record. Not because it introduced new capabilities, but because a conversational interface made the full power of a large language model legible to anyone with a browser. Millions of people sat down, typed a sentence, and watched something that felt like thinking happen in response.

The People Who Made It Happen

McCulloch and Pitts provided the foundational concept. Turing provided the philosophical framework and organizing question. McCarthy named the field. Minsky built its institutional architecture, even as his 1969 book nearly killed it. Rosenblatt gave the field its first learning machine. Hinton kept neural networks alive through two winters, helped solve the training problem, and watched with a mixture of pride and growing concern as the systems he helped build became more capable than he had anticipated. LeCun gave the field convolutional networks and the first proof that learned representations could outperform hand-engineered features at real-world scale. Bengio provided much of the foundational theory and became the field’s most prominent voice on safety. Sutskever co-authored AlexNet, co-founded OpenAI, and drove the GPT series before leaving to found Safe Superintelligence. OpenAI chief executive Sam Altman made the decision to release ChatGPT publicly, turning an abstract technical debate into a mass cultural experience.

Andreessen’s 80-year framing is not historical interest for its own sake. It is a structural argument about where we are. The technologies that reshape civilizations almost never arrive on the schedule their inventors expect. They require the convergence of the right idea, the right hardware, the right data, and the right moment of public readiness. Usually the idea comes first and waits decades for the rest. What began as a shirtless philosopher’s conversation about building machines on the model of the brain has become the most consequential technological transition of our lifetimes. It took 80 years. It was worth the wait.