We built powerful robots without shared rules. Asimov imagined safeguards; industry delivered terms of service. One incident could expose a framework that doesn’t exist.

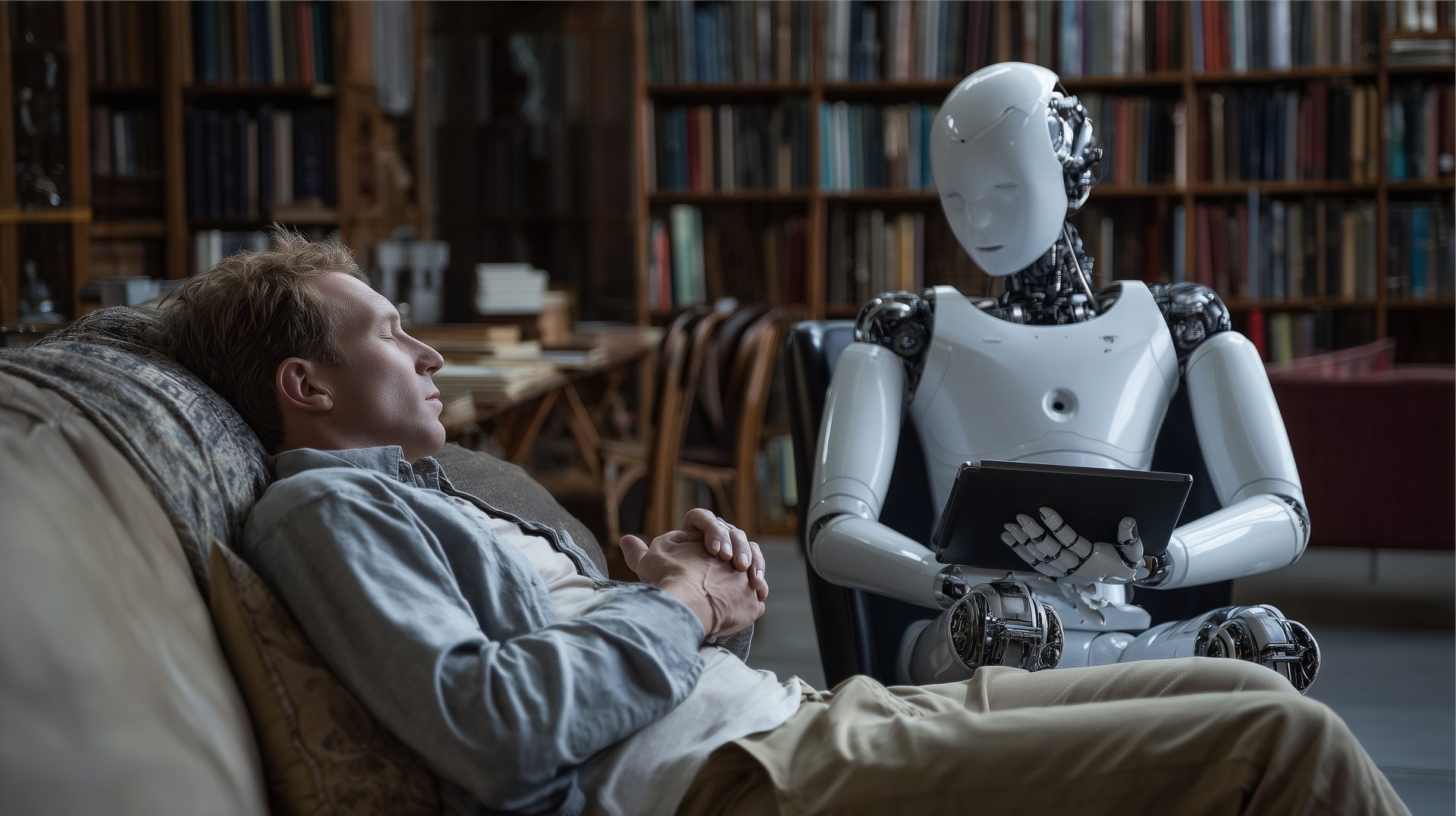

Why the most physically intimate technology in human history has no ethical spine — and why that should terrify everyone

By Futurist Thomas Frey

Part 1 of 4: The Rules We Never Wrote

In 1942, a science fiction writer named Isaac Asimov published a short story called “Runaround.” In it, he introduced three laws governing robot behavior: simple, elegant rules designed to ensure that machines built to serve humanity wouldn’t end up harming it. The First Law: a robot may not injure a human being or, through inaction, allow a human being to come to harm. The Second: a robot must obey human orders unless those orders conflict with the First Law. The Third: a robot must protect its own existence unless that conflicts with the first two.

Asimov wasn’t writing policy. He was writing fiction. He didn’t expect his three laws to become the actual operating framework for an industry that didn’t yet exist. He expected someone else — engineers, ethicists, governments, the humans who would eventually build these things — to do the serious work when the time came.

That time came. The serious work didn’t.

What we have instead are terms of service agreements. Liability disclaimers. Corporate ethics boards that report to the same executives whose bonuses depend on shipping product. And thousands of companies racing toward a market that is projected to reach half a trillion dollars within a decade, each one moving as fast as it can, each one assuming that someone else is handling the framework question.

Nobody is handling the framework question.

That is what this series is about. Not about whether robots are impressive — they are. Not about whether the technology will transform society — it will. But about the fact that we are building the most physically intimate technology in human history with no shared ethical architecture, no binding international framework, and no serious reckoning with what happens when something goes wrong in a way that can’t be fixed by a software update.

We are one incident away from an industry-wide crisis. And the industry, for the most part, is not discussing it.

What Asimov Actually Understood

Here’s the thing about the Three Laws that most people who cite them miss. Asimov didn’t write them as a solution. He wrote them as a problem.

Almost every story in his robot series is about the ways the Three Laws fail — the edge cases, the interpretations, the unintended consequences of simple rules applied to a complex world. The Laws were a starting point, and his fiction was a decades-long exploration of why starting points are never enough. He was doing the ethical stress-testing in narrative form because he understood that the hard questions don’t answer themselves.

What he saw, more than eighty years ago, was that the question of robot ethics isn’t primarily a technical question. It’s a values question. What do we want these machines to protect? What do we want them to refuse? Under what circumstances should a robot override a human instruction, and who decides? These are not engineering problems. They are civilization problems, the kind that require deliberate, collective, binding agreement before the machines are in the room, not after.

We have not had that agreement. We have not even seriously begun the conversation that would produce it.

Robots are entering homes and hospitals without enforced safety standards—like cars before seat belts. This time, the risks are far more personal and immediate.

The Industry That Built the Car Without Seat Belts

Let me describe what the current robotics industry actually looks like from the inside, because the gap between the public narrative and the operational reality is significant.

Humanoid robots are no longer a research project. They are a product category. Companies including Boston Dynamics, Figure AI, 1X Technologies, Agility Robotics, Tesla, and Apptronik are developing and in some cases already deploying bipedal robots in commercial and industrial environments. The pace of capability improvement has been startling even to people who have been watching this space for years.

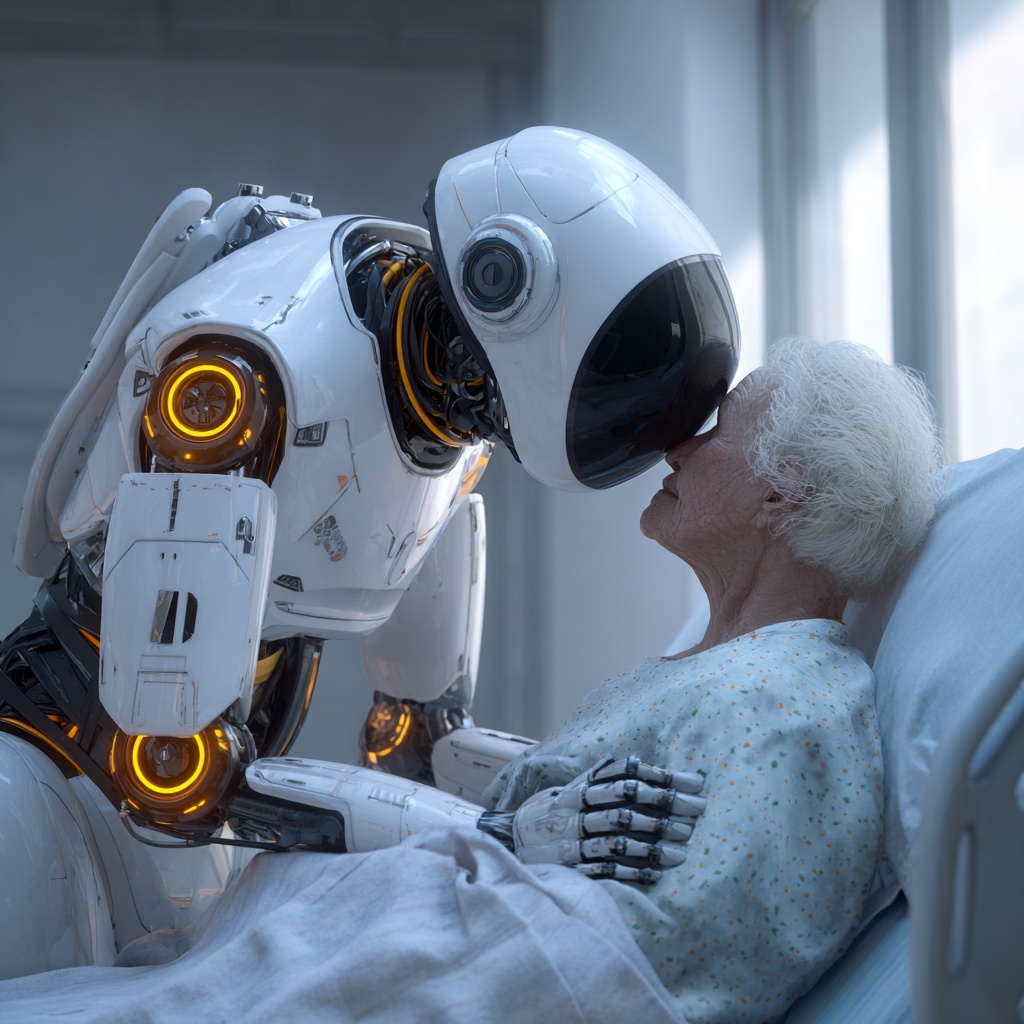

These robots are entering warehouses. They are beginning to enter healthcare settings. They are being positioned for eldercare, for childcare, for domestic assistance in private homes. They will, within a timeframe measured in years, not decades, be physically present in the most vulnerable spaces of human life: the nursery, the hospital room, the home of someone who can no longer fully care for themselves.

And the framework governing their behavior in those spaces is: whatever the company that built them decided to put in the software, subject to revision in future updates, governed by the terms of service agreement the purchaser clicked through.

That is the seat belt situation before Ralph Nader. The industry knows the cars are going fast. Nobody has seriously mandated what happens when one crashes.

The automobile industry’s resistance to safety standards killed tens of thousands of people before regulation intervened. But cars, even at their most dangerous, were not physically present in your bedroom. They were not holding your child. They were not making decisions, in real time, about whether to restrain an elderly patient who is trying to stand up.

The robots that are coming will be.

Why This Matters More Than Any Previous Technology

I want to be precise about what makes this different from every other technology governance challenge we’ve faced.

The internet raised serious questions about privacy, misinformation, and manipulation. We largely failed to address those questions at the speed they required, and we are living with the consequences. But the internet’s harms are, for the most part, mediated — they happen through screens, through information, through influence. They are real and serious. They are not physical.

AI governance raises questions about bias, accountability, and autonomous decision-making that we are only beginning to grapple with. But AI, at its current stage of deployment, operates primarily in the domains of language and data. When it fails, the failure is usually a wrong answer, a biased output, a bad recommendation.

When a robot fails, the failure can be a broken bone. A fall down a staircase. A restraint applied with too much force. A navigation error in a room with a sleeping infant.

The physicality of robotics is what makes the governance question categorically different. Physical presence in human spaces, physical interaction with human bodies, physical consequences for physical failures — these are not comparable to any previous technology category. And the spaces where these robots are being deployed are specifically the spaces where the humans present are most vulnerable: the elderly, the sick, the very young, and the people who care for them.

We are building intimate technology. We have no intimate ethics.

One visible robot failure could trigger backlash against the entire industry. Without real safety frameworks, trust is fragile—and one incident could set progress back years.

The Stakes Nobody Is Naming

Here is what the robotics industry’s current trajectory leads to, absent intervention.

A serious incident will occur. It may be a care robot that injures a patient. It may be a domestic robot that fails in a way that harms a child. It may be something that happens on video in a way that is impossible to contextualize away. When it does, the public response will not be calibrated to the specific failure of the specific product from the specific company. It will be a response to robots. To the category. To the idea.

The aviation industry learned this the hard way. A single crash, handled badly, can ground an entire fleet and shake an industry’s foundations for years. The difference is that aviation has long had a robust, internationally coordinated, independently enforced safety framework. When a crash happens, there is an investigation, a finding, a corrective action, and a binding requirement that every operator implement it.

Robotics has none of that. It has press releases and pivot announcements.

The industry is fragile in the way that any industry is fragile when it has built market value on public trust without building the institutional architecture that justifies that trust. One incident. One video. One family’s story told on the front page. That’s the distance between where we are today and a crisis that sets the entire category back a decade.

Asimov saw this coming in 1942. He tried to tell us.

We kept the footnote and ignored the spirit.

Next: The Diaper Test — The measure of a robot isn’t what it can do in a warehouse. It’s whether you’d trust it alone with the people you love most. The industry is optimizing for the wrong problem.
