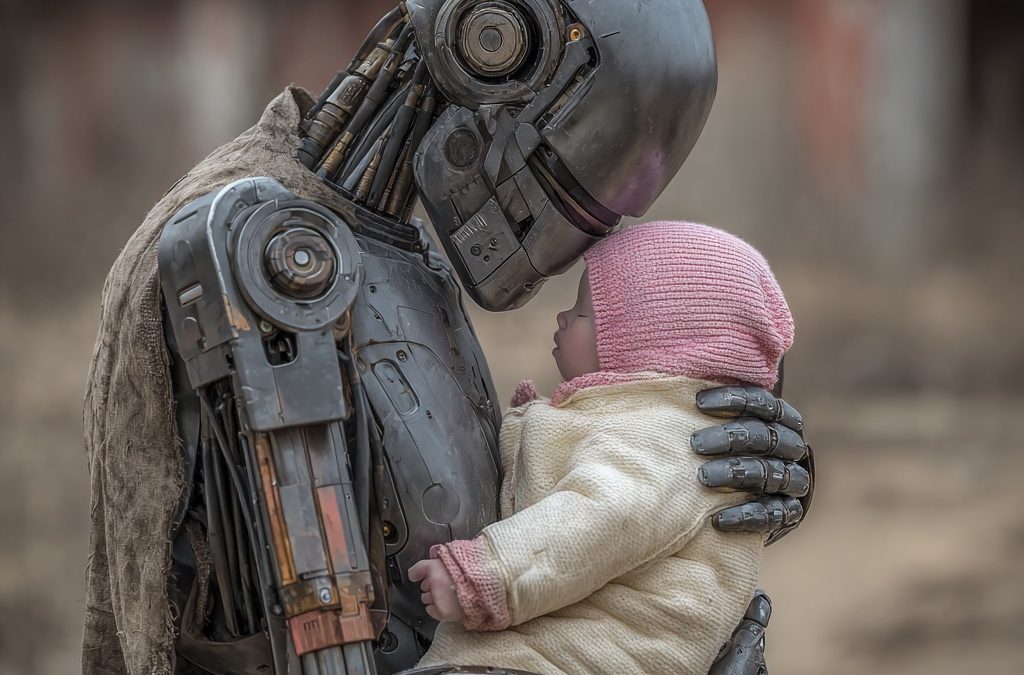

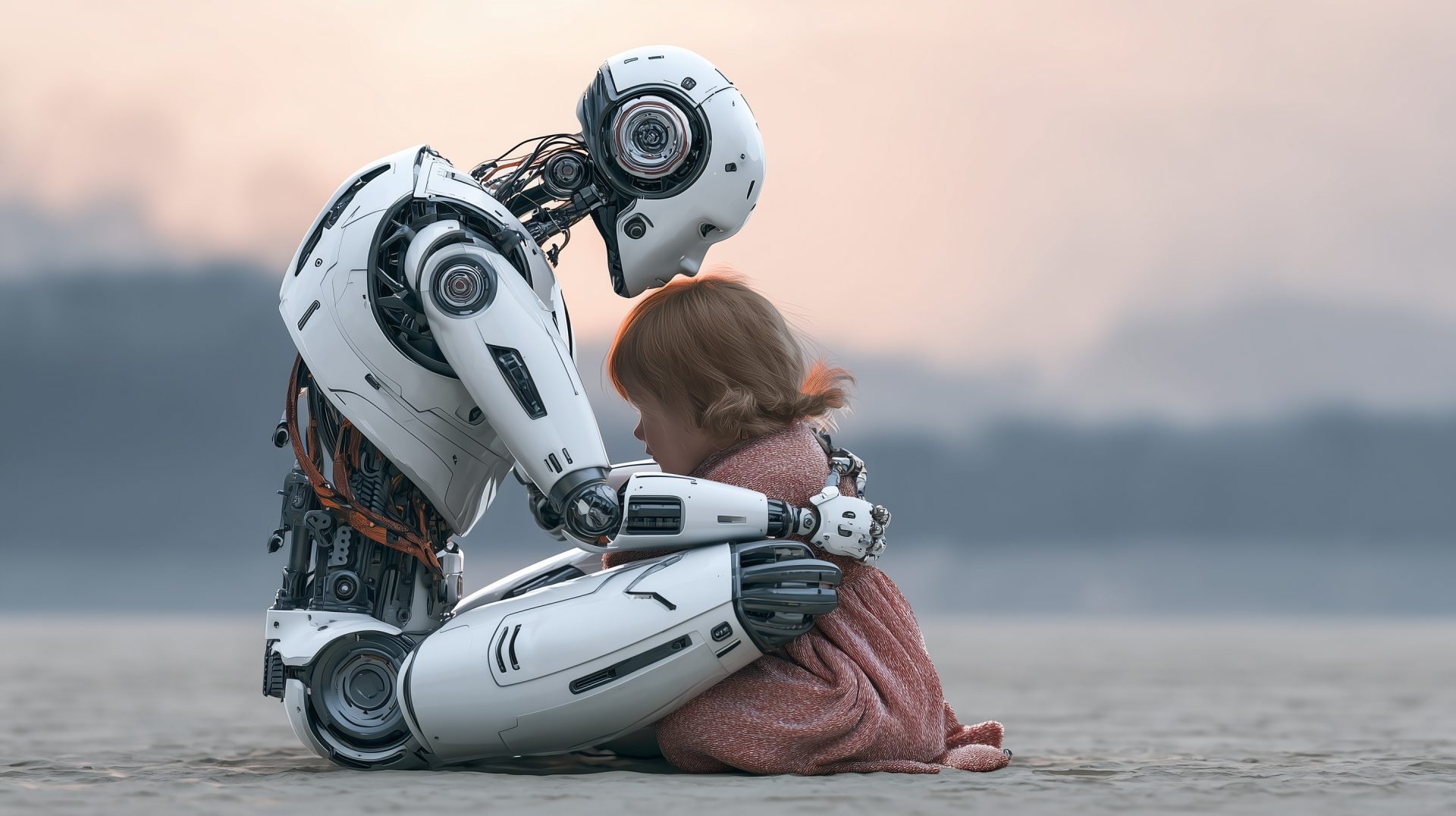

The real measure of a robot has never been what it can do in a warehouse. It’s whether you’d trust it alone with the people you love most.

By Futurist Thomas Frey

Part 2 of 4: The Wrong Problem

It was 2 in the morning, and Sarah hadn’t slept more than three hours in as many days.

Her two-month-old, Leo, had been crying for what felt like hours. She placed him on the changing table, peeled back the diaper, and watched the situation spiral. Leo kicked, squirmed, and managed to make the mess considerably worse. It spread across the changing table, onto Sarah’s shirt, and across the floor. She was exhausted, overwhelmed, and running out of hands.

I told this story in a column on FuturistSpeaker.com earlier this year, posing what I called the Turing Test for humanoid robots. The original Turing Test — Alan Turing’s 1950 benchmark for machine intelligence — asked whether a machine could hold a conversation indistinguishable from a human. A meaningful threshold, but an intellectual one. What I proposed was a different kind of threshold entirely: not can the machine think like a human, but can it act like one in the moments of genuine, physical, emotionally loaded caregiving that define what it means to care for another person?

The test: Can a humanoid robot change a dirty diaper at 2 in the morning — gently, competently, calmly, without injuring an infant or escalating the chaos — in a way that a frazzled, sleep-deprived parent would trust it to do alone?

I called it the Diaper Test. And the more I’ve thought about it since, the more I believe it is not just a benchmark for robotic capability. It is the benchmark for whether this industry has earned the right to be where it’s heading.

Why Turing Got It Half Right

Turing’s original test was revolutionary because it shifted the question from internal mechanism to observable behavior. We don’t need to know how a machine thinks, he argued. We just need to know whether its behavior is indistinguishable from thinking. That reframing changed everything about how we approach artificial intelligence.

But Turing was working in the realm of language and cognition. His test lives in conversation — in text or speech, in the back-and-forth of questions and answers. When AI systems pass versions of the Turing Test today, they do so through words. They can argue, persuade, explain, and comfort in language that sounds deeply human.

What they cannot yet do is walk into a dark nursery at two in the morning, pick up a squirming, crying infant with the precise force required to be secure without being harmful, clean a chaotic mess while keeping the baby calm, and set a clean, soothed child back down — all without any of the dozens of micro-adjustments going wrong in ways that a tired human parent would catch on instinct.

That is a different kind of test. It requires fine motor precision at the level of handling a fragile, uncooperative living being. It requires real-time adaptability to behavior that is entirely unpredictable — a baby who kicks at exactly the wrong moment, who grabs at something they shouldn’t, who startles in a direction the robot didn’t anticipate. It requires the ability to soothe and calm through touch, sound, and movement — the physical language of comfort that parents develop over weeks of learning their specific child’s specific responses.

And it requires judgment. Not the computational kind. The kind that knows the difference between a cry of distress and a cry of mild frustration, that understands when to persist and when to pause, that can read a situation and decide what the right action is when the right action isn’t in any manual.

Robotics measures performance in controlled tasks. Real trust depends on unpredictable moments—where judgment matters more than benchmarks. That’s the gap the industry hasn’t closed.

What the Industry Is Actually Building For

Here is the uncomfortable question. Walk through any major robotics demonstration right now, and count the benchmarks being celebrated.

Payload capacity. Locomotion stability on uneven terrain. Object manipulation success rates in controlled environments. Battery endurance. Processing latency. Navigation accuracy in mapped spaces. The ability to fold laundry, operate a drill press, or sort packages in a fulfillment center.

These are real engineering achievements. They matter. But none of them answer the question that the Diaper Test asks.

What does the robot do when something happens that wasn’t in the training data? When the baby rolls in an unexpected direction at exactly the wrong moment? When the elderly patient becomes frightened and starts to resist? When the child runs in front of the machine and the navigation system has 200 milliseconds to decide what to do in a situation where 200 milliseconds is the entire margin?

These are not exotic edge cases. They are the routine texture of caring for vulnerable human beings. Any parent, any nurse, any home health aide will tell you that the job is made almost entirely of unexpected situations. Moments where the correct response requires not just processing speed but something that functions like wisdom — the ability to weigh competing obligations in real time when the stakes are irreversibly human.

The industry’s benchmarks measure performance in expected conditions. The Diaper Test measures readiness for unexpected ones. We have been conflating the two as though they were the same problem. They are not.

The Intimacy Gap

In the original FuturistSpeaker.com column, I argued that passing the Diaper Test would be a watershed moment: the robotic equivalent of the iPhone, the kind of breakthrough that doesn’t just sell products but reshapes what people believe is possible. I stand by that. The moment a robot can genuinely handle that 2 a.m. scenario, not in a lab, not in a demo, but in a real home with a real exhausted parent watching, the consumer robotics market will never be the same.

But here, in the context of this series, I want to press on a harder version of the same argument.

The spaces where humanoid robots are being positioned — homes, hospitals, care facilities, nurseries — are not like warehouses. Warehouses are designed environments, controlled and predictable, built around machine-compatible workflows. A home is chaos organized by love. A hospital room is fear and vulnerability and the constant possibility of things going wrong in ways that matter enormously. A nursery is a space where the margin for error is measured in different units entirely.

The intimacy of these spaces is what makes the Diaper Test the right benchmark. Not because changing diapers is the most complex task imaginable, but because it concentrates, in one scenario, all of the things that make care work genuinely hard: physical delicacy, unpredictable human behavior, emotional stakes, and the irreversibility of certain kinds of failure.

A robot that fails a warehouse sorting task costs the company time and money. A robot that fails the Diaper Test costs something that cannot be quantified and cannot be patched in the next update.

The Experts Nobody Is Asking

In the original column I wrote about the societal transformations that a diaper-changing robot would unleash — the potential to ease the burden on young families, support aging populations, rebalance caregiving responsibilities, and give parents back the time and energy they need to actually be present with their children. I believe all of that is true.

But there is a community of people who understand what it would actually take to get there — and they are almost entirely absent from the conversations shaping this industry.

Pediatric nurses. Neonatal intensive care unit staff. Hospice workers. Home health aides who spend twelve-hour shifts with people who have late-stage dementia. Foster care workers. These people know, in their bodies and their years of experience, what genuine care requires. Ask any one of them whether the robots they have seen demonstrated are ready to be trusted alone with the people they serve, and the answer will be more honest, more specific, and more useful than most product roadmaps currently circulating in the robotics investment community.

They should be in the room where these products are being designed. They should be setting the benchmarks. They should be the ones deciding when the test has been passed.

They are not. Not yet. And that gap between the people who build care robots and the people who actually provide care is one of the most dangerous gaps in the industry.

The real benchmark isn’t a demo. It’s trust. Until a parent would leave a child alone with a robot, the technology isn’t ready.

What Passing Looks Like

So what would it actually mean to pass the Diaper Test?

It would mean a robot that a parent would genuinely trust to be left alone with their child, having seen it perform not in a demo but in the real conditions of their real home. A parent who trusts its physical judgment. Who believes it will handle the unexpected correctly. Who has no hesitation about leaving the room.

That bar has never been met. The industry is not close to meeting it. And the path to meeting it does not run through better warehouse benchmarks or more impressive locomotion demos.

It runs through a completely different orientation to the design problem — one that starts not with what the robot can do in optimal conditions but with what it must reliably do in the hardest ones.

We are the last generation without advanced robots everywhere. Our children will grow up as robot natives, for whom humanoid helpers are simply part of the world. For that future to be the one I described in my original column — the one where robots genuinely extend human capability and human care — the industry needs to prove it can pass the test that actually matters.

Not the benchmark that impresses investors. The one that earns the trust of a sleep-deprived parent at two in the morning.

That test is still waiting.

Next: One Incident Away — Trust in robots will not be built incrementally. But it can be destroyed in a single afternoon. The military robotics programs running parallel to care robots are the industry’s most dangerous open secret.

Related Reading

The Turing Test for Humanoid Robots: Changing an Infant’s Dirty Diaper

FuturistSpeaker.com — The original column that introduced the Diaper Test as the real benchmark for humanoid robot capability — and explored the societal transformations that would follow a robot that could genuinely pass it

What Robots Still Can’t Do: The Limits of Machine Judgment in Human Environments

MIT Technology Review — A rigorous technical examination of where the capability frontier in robotics actually sits, and why the gap between benchmark performance and real-world trustworthiness in complex human environments is wider than most product timelines acknowledge

The Invisible Experts: Why Care Workers Should Be Shaping Robot Design

Harvard Business Review — The case for putting nurses, home health aides, and childcare professionals at the center of the robotics design process, rather than treating them as end users to be trained on finished products